ALKO-fit (object localization) (Prof. Dr. Carsten Meyer)

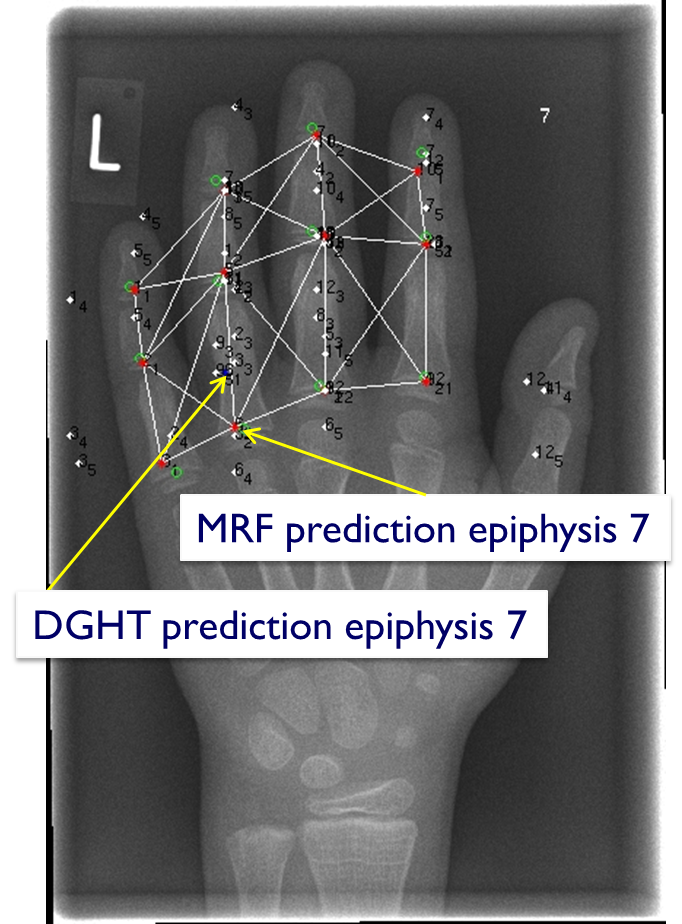

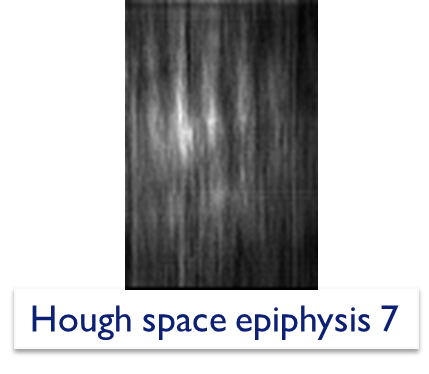

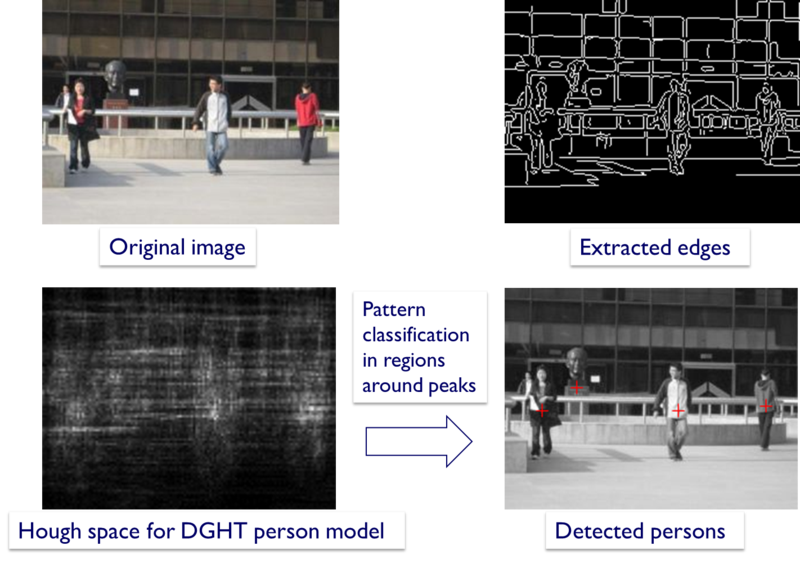

With the increasing number and volume of digital data, for example in medical imaging, it is becoming more and more important to develop algorithms that analyse images automatically, e.g. for diagnosis and therapy. A central task is to automatically localize and classify characteristic structures in the images, e.g. organs or landmarks. A number of algorithms have been developed for this purpose, among them a very successful method based on the "Discriminative Generalized Hough Transform" (DGHT) (project ALIAS). For a given target landmark, this method automatically learns a set of characteristic model points that are especially suited to identifying it. The learned model is then applied to a new image, where each model point "votes" for a location of the target landmark. In this way, possible object locations are determined automatically (see the bright spots in the Hough space image of the epiphysis example).
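The voting step can be illustrated with a minimal generalized Hough transform sketch in Python/NumPy. It assumes that each model point is reduced to a displacement vector (from a matching image feature point to the landmark) plus a weight. Unlike the DGHT, the model points and weights here are hand-supplied rather than learned, and edge orientation is ignored; the function name `hough_vote` and the toy data are hypothetical.

```python
import numpy as np


def hough_vote(feature_points, offsets, weights, shape):
    """Accumulate weighted votes for one target landmark (simplified GHT).

    feature_points: (N, 2) int array of (row, col) feature/edge points in the image.
    offsets: (M, 2) int array; hypothetical model points, each stored as the
        displacement from a matching feature point to the landmark.
    weights: (M,) array of model-point weights (supplied here, not learned).
    shape: (H, W) size of the Hough space (same grid as the image).
    Edge orientation is ignored in this sketch.
    """
    hough = np.zeros(shape, dtype=float)
    for offset, weight in zip(offsets, weights):
        votes = feature_points + offset  # landmark position implied by each point
        inside = ((votes[:, 0] >= 0) & (votes[:, 0] < shape[0])
                  & (votes[:, 1] >= 0) & (votes[:, 1] < shape[1]))
        v = votes[inside]
        np.add.at(hough, (v[:, 0], v[:, 1]), weight)  # handles repeated cells
    return hough


# Toy example: three feature points, two model points.
points = np.array([[10, 10], [12, 14], [30, 5]])
offsets = np.array([[5, 5], [3, 1]])
weights = np.array([1.0, 0.5])
hough = hough_vote(points, offsets, weights, (40, 40))
peak = tuple(int(c) for c in np.unravel_index(np.argmax(hough), hough.shape))
print(peak, hough[peak])  # (15, 15) 1.5
```

Two different feature points agree on (15, 15), so their votes add up and that cell becomes the brightest spot of the Hough space, i.e. the most likely landmark position. `np.add.at` is used instead of `hough[...] += weight` so that several votes falling into the same cell are all counted.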

Example: DGHT localization of epiphyses (finger joints) in hand radiographs

Left image: Hough space to localize epiphysis 7

Right image: Combined DGHT-MRF localization; the MRF corrects the DGHT prediction of epiphysis 7

In many cases, however, a whole set of landmarks or structures has to be localized in a given image, and there is often a characteristic spatial relationship between them (especially in medical imaging; consider, e.g., organ systems). To exploit this, the DGHT-based object localization system is to be extended by graphical models ("Markov Random Fields", MRF). Markov Random Fields make it possible to model the spatial relations between objects, e.g. their expected positions or mutual distances. The combined system then determines the best overall configuration of the landmarks: the position of each landmark fits the local image content as well as possible, and the spatial arrangement of all landmarks is consistent. In this way, errors in the localization of individual landmarks can be avoided (see the right image of the epiphysis example) and more information can be extracted from the image.
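The combination of local image fit and spatial consistency can be sketched as energy minimization in an MRF. The sketch below assumes a chain-structured MRF (landmark i is linked only to landmark i+1), unary costs taken from the DGHT (e.g. negated Hough votes of a few candidate peaks per landmark) and a quadratic (Gaussian) penalty on deviations from an expected displacement. Under these assumptions the best configuration can be found exactly by min-sum dynamic programming. The graph structure, potentials and inference actually used in the project may differ; `best_configuration` and the toy data are hypothetical.

```python
import numpy as np


def best_configuration(candidates, unary, mean_disp, sigma):
    """MAP configuration of a chain MRF by min-sum dynamic programming.

    candidates: list of L arrays; candidates[i] has shape (K_i, 2) and holds
        candidate positions of landmark i (e.g. the top Hough peaks).
    unary: list of L arrays of shape (K_i,); local costs, lower = better fit
        (assumed here to be negated Hough votes).
    mean_disp: (L-1, 2) array; assumed expected displacement from landmark i to i+1.
    sigma: assumed spread of the Gaussian displacement prior.
    Returns (index of the chosen candidate per landmark, total energy).
    """
    cost = np.asarray(unary[0], dtype=float)  # best energy ending in each candidate
    backptr = []
    for i in range(1, len(candidates)):
        # Displacements between all candidate pairs: shape (K_{i-1}, K_i, 2).
        disp = candidates[i][None, :, :] - candidates[i - 1][:, None, :]
        pair = np.sum((disp - mean_disp[i - 1]) ** 2, axis=-1) / (2 * sigma**2)
        total = cost[:, None] + pair
        backptr.append(np.argmin(total, axis=0))  # best predecessor per candidate
        cost = total.min(axis=0) + np.asarray(unary[i], dtype=float)
    # Backtrack from the best candidate of the last landmark.
    idx = [int(np.argmin(cost))]
    for bp in reversed(backptr):
        idx.append(int(bp[idx[-1]]))
    return idx[::-1], float(cost.min())


# Toy chain of three landmarks, expected spacing (0, 10).
candidates = [np.array([[0, 0]]),
              np.array([[0, 10], [0, 40]]),  # (0, 40) is a strong false peak
              np.array([[0, 20]])]
unary = [np.array([-1.0]), np.array([-0.5, -1.0]), np.array([-1.0])]
mean_disp = np.array([[0, 10], [0, 10]])
choice, energy = best_configuration(candidates, unary, mean_disp, sigma=2.0)
print(choice, energy)  # [0, 0, 0] -2.5
```

Picking the locally best candidate for the middle landmark would select the misplaced peak at (0, 40), since its unary cost is lower. The spatial prior makes that choice far too expensive, so the chain selects (0, 10) instead, the same kind of correction shown for epiphysis 7. For general (non-chain) graphs, exact inference is usually harder and approximate methods are typically used.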

The goal of the project is to develop generic automatic learning algorithms for the combined DGHT-MRF system and to evaluate it in several medical and non-medical applications.

Example: Pedestrian localization

Project leader: Prof. Dr. Carsten Meyer

(the project ended in February 2020)